How Do I Build a ReAct Agent in Python (Reasoning + Acting Loop)?

A ReAct agent isn't a framework. It's a pattern, and a small, ugly Python loop is enough to build one. If you understand what's happening inside that loop, every agent framework on the market suddenly makes sense, and you can debug the ones that don't work. Here's how to build a ReAct agent from scratch in about 100 lines, with no dependencies beyond the Anthropic SDK and `requests` for the example tool.

Why this matters

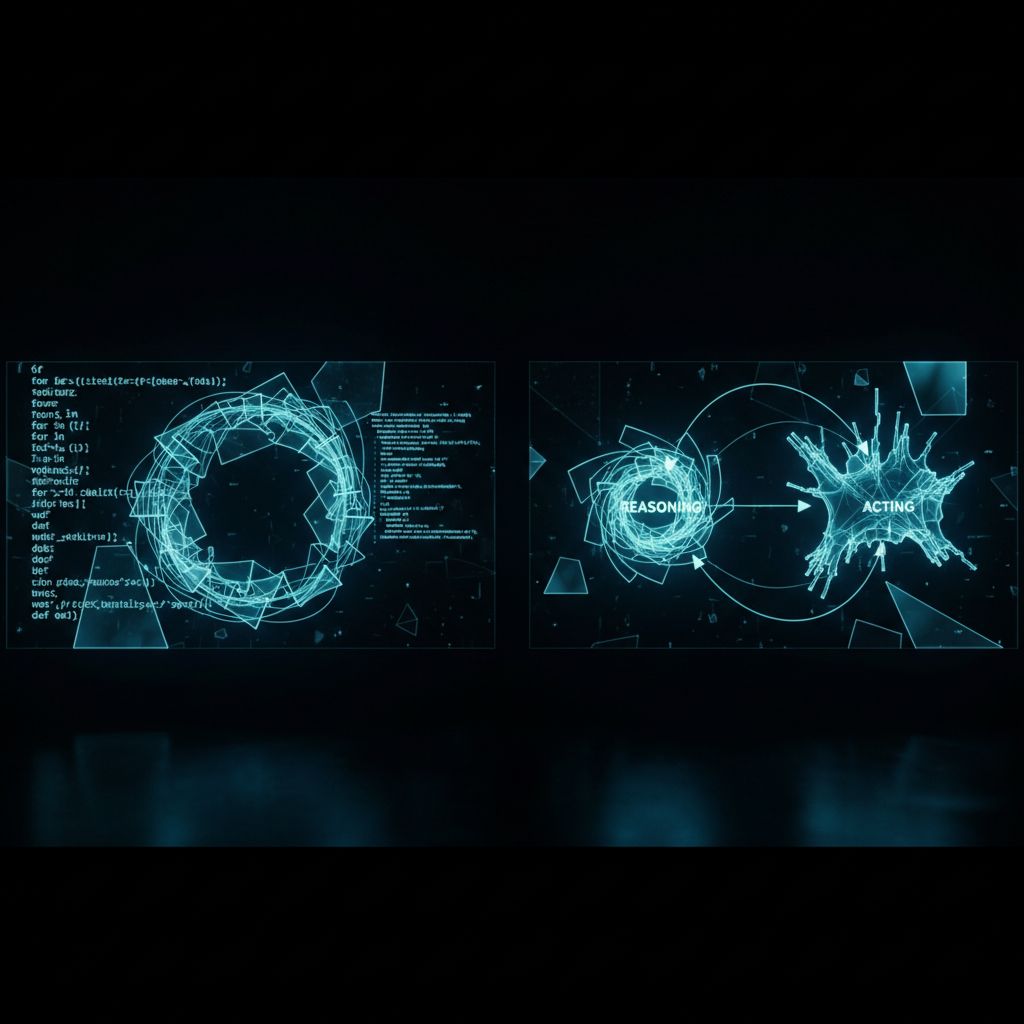

ReAct stands for Reasoning + Acting. Most LLM responses are one-shot — the model takes your prompt, generates an answer, done. ReAct is different: the model is allowed to think out loud, call a tool, see the result, and think again before answering.

Why this is the dominant agent pattern: it lets a small model solve big problems. Instead of cramming the whole problem into one prompt and praying, you give the model a few tools, a clear goal, and let it iterate. It works the way humans actually solve problems.

Most teams reach for LangGraph or AgentScope before they understand the loop those frameworks are wrapping. That's backwards. Build it once from scratch and the rest is library shopping.

Before you start

You need:

- Python 3.10+ and a virtualenv.

- An Anthropic API key: `export ANTHROPIC_API_KEY=sk-ant-...`

- The Anthropic SDK and `requests` for the example tool.

- About 30 minutes.

```shell
python -m venv .venv && source .venv/bin/activate
pip install anthropic requests
```

Step 1: Define the tools

A tool is a function the agent can call. Each tool has a JSON schema (so the model knows how to call it) and an implementation (so something actually happens).

```python
import requests


def get_weather(city: str) -> str:
    """Get current weather for a city."""
    # A timeout keeps a slow wttr.in response from hanging the agent.
    r = requests.get(f"https://wttr.in/{city}?format=3", timeout=10)
    r.raise_for_status()
    return r.text.strip()


def calculator(expression: str) -> str:
    """Evaluate a Python math expression. Allowed: +, -, *, /, **, parentheses, numbers."""
    # Demo only: emptying __builtins__ does not make eval() safe on untrusted input.
    safe = {"__builtins__": {}}
    return str(eval(expression, safe, {}))


TOOLS = [
    {
        "name": "get_weather",
        "description": "Get current weather conditions for a city.",
        "input_schema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
    {
        "name": "calculator",
        "description": "Evaluate a math expression.",
        "input_schema": {
            "type": "object",
            "properties": {"expression": {"type": "string"}},
            "required": ["expression"],
        },
    },
]

# Maps the tool name Claude sends back to the function that implements it.
TOOL_FNS = {"get_weather": get_weather, "calculator": calculator}
```

Two tools are enough to demo the pattern. Real agents often carry 5–15.
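The `eval`-based calculator is fine for a demo, but an empty `__builtins__` does not stop a determined input. Here is a minimal sketch of one alternative, assuming you only need the operators the docstring lists. It walks the parsed expression and rejects everything else. The names `safe_calculator` and `MAX_EXPONENT` are hypothetical, and the exponent cap is an arbitrary choice.

```python
import ast
import operator

# Map AST operator nodes to the arithmetic they represent.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.Pow: operator.pow,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}

MAX_EXPONENT = 100  # assumed cap so something like 9**9**9 cannot stall the process


def _eval_node(node):
    # Plain int/float literals only (bool is excluded by the exact type check).
    if isinstance(node, ast.Constant) and type(node.value) in (int, float):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
        left, right = _eval_node(node.left), _eval_node(node.right)
        if isinstance(node.op, ast.Pow) and abs(right) > MAX_EXPONENT:
            raise ValueError("exponent too large")
        return _BIN_OPS[type(node.op)](left, right)
    if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
        return _UNARY_OPS[type(node.op)](_eval_node(node.operand))
    # Names, calls, attribute access and anything else are refused.
    raise ValueError(f"unsupported expression element: {type(node).__name__}")


def safe_calculator(expression: str) -> str:
    """Evaluate +, -, *, /, ** and parentheses over numbers, nothing else."""
    tree = ast.parse(expression, mode="eval")
    return str(_eval_node(tree.body))


print(safe_calculator("25 * 3"))  # → 75
```

Because it has the same signature as `calculator`, you could register it in `TOOL_FNS` under the same tool name. Errors raise `ValueError` (or `SyntaxError` for unparseable input), which the loop can report back as a tool result.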

Step 2: Write the loop

This is the core. A bounded loop (capped at `MAX_ITERS`) that calls Claude, checks for tool calls, runs them, and feeds results back until Claude returns a final answer.

```python
from anthropic import Anthropic

client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment
MODEL = "claude-sonnet-4-5-20250929"
MAX_ITERS = 8


def run_agent(user_message: str) -> str:
    messages = [{"role": "user", "content": user_message}]

    for step in range(MAX_ITERS):
        response = client.messages.create(
            model=MODEL,
            max_tokens=1024,
            tools=TOOLS,
            messages=messages,
        )

        # Anything other than "tool_use" (end_turn, max_tokens, ...) means there
        # are no tool calls left to run, so return whatever text Claude produced.
        if response.stop_reason != "tool_use":
            return "".join(b.text for b in response.content if b.type == "text")

        # Otherwise, run any tool calls and append the results.
        messages.append({"role": "assistant", "content": response.content})
        tool_results = []
        for block in response.content:
            if block.type == "tool_use":
                try:
                    # Lookup sits inside the try so an unknown tool name is
                    # reported back to Claude instead of raising KeyError.
                    fn = TOOL_FNS[block.name]
                    output = str(fn(**block.input))
                except Exception as e:
                    output = f"ERROR: {e}"
                tool_results.append({
                    "type": "tool_result",
                    "tool_use_id": block.id,
                    "content": output,
                })
        messages.append({"role": "user", "content": tool_results})

    return "Agent hit max iterations without a final answer."
```

Three pieces of work in this loop:

- Call Claude with the tools list. Claude either returns text (answer) or `tool_use` blocks (wants to call something).

- Run the requested tools. We grab each `tool_use` block, look up the function, run it, build a `tool_result`.

- Feed results back. Append assistant message + user message (with tool_results) and loop.
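Those three steps leave a specific shape in the message list: every `tool_use` id in an assistant turn has to be answered by a `tool_result` with the same `tool_use_id` in the very next user turn. Here is a rough sketch of a checker for that pairing. It assumes the blocks are plain dicts (the SDK hands back objects, so you would convert them first), and both the helper name `unanswered_tool_calls` and the id `toolu_01` are made up for illustration.

```python
def unanswered_tool_calls(messages):
    """Return ids of tool_use blocks that have no tool_result in the next message."""
    missing = []
    for i, msg in enumerate(messages):
        # Only assistant turns with block-list content can contain tool calls.
        if msg["role"] != "assistant" or isinstance(msg["content"], str):
            continue
        requested = [b["id"] for b in msg["content"] if b["type"] == "tool_use"]
        if not requested:
            continue
        nxt = messages[i + 1] if i + 1 < len(messages) else None
        answered = set()
        if nxt and nxt["role"] == "user" and not isinstance(nxt["content"], str):
            answered = {
                b["tool_use_id"] for b in nxt["content"] if b["type"] == "tool_result"
            }
        missing.extend(t for t in requested if t not in answered)
    return missing


# One full round trip: question, tool request, tool result.
transcript = [
    {"role": "user", "content": "What's the weather in Austin?"},
    {"role": "assistant", "content": [
        {"type": "text", "text": "I'll check the weather."},
        {"type": "tool_use", "id": "toolu_01", "name": "get_weather",
         "input": {"city": "Austin"}},
    ]},
    {"role": "user", "content": [
        {"type": "tool_result", "tool_use_id": "toolu_01", "content": "Austin: +30°C"},
    ]},
]

print(unanswered_tool_calls(transcript))      # → []
print(unanswered_tool_calls(transcript[:2]))  # → ['toolu_01']
```

A non-empty result usually means the loop skipped a block or dropped a result, which is worth catching before the next API call rejects the conversation.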

Step 3: Test it

```python
if __name__ == "__main__":
    print(run_agent("What's the weather in Austin and is it warmer than 25 * 3 degrees?"))
```

Run it. You'll see Claude:

- Call `get_weather` for Austin.

- Call `calculator` with `25 * 3`.

- Compare the two and answer in plain language.

That's a ReAct loop. Two tool calls, one final answer, and typically three round trips to the API (two if Claude requests both tools in the same turn).
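If you'd rather check the control flow before spending API calls, you can drive a loop of the same shape with canned responses. This is only a sketch: `ScriptedClient`, `run_loop`, and `fake_weather` are hypothetical stand-ins, and the fake responses mimic only the attributes the loop reads (`stop_reason`, `content`, and each block's `type`, `id`, `name`, `input`, `text`).

```python
from types import SimpleNamespace as NS


class ScriptedClient:
    """Hypothetical stand-in that replays canned responses instead of calling the API."""

    def __init__(self, responses):
        self._responses = iter(responses)
        self.messages = self  # so client.messages.create(...) resolves to create() below

    def create(self, **kwargs):
        return next(self._responses)


def run_loop(client, tool_fns, user_message, max_iters=8):
    """Compact variant of the agent loop, with the client and tools passed in."""
    messages = [{"role": "user", "content": user_message}]
    for _ in range(max_iters):
        response = client.messages.create(messages=messages)
        if response.stop_reason != "tool_use":
            return "".join(b.text for b in response.content if b.type == "text")
        messages.append({"role": "assistant", "content": response.content})
        results = []
        for block in response.content:
            if block.type == "tool_use":
                try:
                    output = str(tool_fns[block.name](**block.input))
                except Exception as e:
                    output = f"ERROR: {e}"
                results.append(
                    {"type": "tool_result", "tool_use_id": block.id, "content": output}
                )
        messages.append({"role": "user", "content": results})
    return "Agent hit max iterations without a final answer."


calls = []  # records which cities the fake tool was asked about


def fake_weather(city):
    calls.append(city)
    return f"{city}: +30°C"


# Turn 1 asks for a tool, turn 2 gives the final answer.
script = [
    NS(stop_reason="tool_use", content=[
        NS(type="tool_use", id="t1", name="get_weather", input={"city": "Austin"}),
    ]),
    NS(stop_reason="end_turn", content=[NS(type="text", text="Austin is at 30°C.")]),
]

answer = run_loop(ScriptedClient(script), {"get_weather": fake_weather}, "Weather in Austin?")
print(answer)  # → Austin is at 30°C.
print(calls)   # → ['Austin']
```

This doesn't tell you anything about how Claude behaves, only that your loop dispatches tools and terminates the way you expect.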

Step 4: Add visibility

In production you want to see what the agent is thinking. Add logging that prints each step.

```python
def run_agent_verbose(user_message: str) -> str:
    messages = [{"role": "user", "content": user_message}]

    for step in range(MAX_ITERS):
        print(f"\n--- step {step + 1} ---")
        response = client.messages.create(
            model=MODEL, max_tokens=1024, tools=TOOLS, messages=messages
        )

        for block in response.content:
            if block.type == "text":
                print(f"[think] {block.text}")
            elif block.type == "tool_use":
                print(f"[call ] {block.name}({block.input})")

        # No tool calls requested: this turn is the final answer.
        if response.stop_reason != "tool_use":
            return "".join(b.text for b in response.content if b.type == "text")

        messages.append({"role": "assistant", "content": response.content})
        results = []
        for block in response.content:
            if block.type == "tool_use":
                # Catch tool failures so one bad call is logged, not fatal.
                try:
                    out = str(TOOL_FNS[block.name](**block.input))
                except Exception as e:
                    out = f"ERROR: {e}"
                print(f"[obs  ] {out}")
                results.append({"type": "tool_result", "tool_use_id": block.id, "content": out})
        messages.append({"role": "user", "content": results})

    return "Max iterations reached."
```

This is the minimum viable observability: enough to debug most agent failures without a full tracing stack.
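Printed logs disappear once the process exits. If you want to keep them, one option is to write each think, call, and observe event as a line of JSON instead. Here is a small sketch. `trace_event` and its record fields are assumptions for illustration, and `io.StringIO` stands in for a real log file.

```python
import io
import json
import time


def trace_event(sink, step, kind, payload):
    """Append one JSON line describing a loop event to a file-like sink."""
    record = {"ts": time.time(), "step": step, "kind": kind, "payload": payload}
    # default=str keeps the call from failing on objects json can't serialize.
    sink.write(json.dumps(record, default=str) + "\n")


sink = io.StringIO()  # swap for open("agent_trace.jsonl", "a") in a real run
trace_event(sink, 1, "think", "I should check the weather first.")
trace_event(sink, 1, "call", {"name": "get_weather", "input": {"city": "Austin"}})
trace_event(sink, 1, "obs", "Austin: +30°C")

# Reading the trace back is one json.loads per line.
events = [json.loads(line) for line in sink.getvalue().splitlines()]
print([e["kind"] for e in events])  # → ['think', 'call', 'obs']
```

You would call it at the same three points where the verbose loop prints.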

Verify it worked

Two checks:

- The agent answers the test question correctly. Austin temp + comparison to 75. If it gets either wrong, your tool functions are wrong (test them in isolation first).

- The verbose log shows think → call → observe → answer. If you see only one think + answer, the agent isn't using tools, which usually points to a schema bug. Check that `input_schema` exactly matches what your function expects.
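The schema check can be automated. Below is a sketch that compares each tool's `input_schema` with its Python function using `inspect.signature`. It assumes tools take plain keyword parameters (no `*args` or `**kwargs`), and the name `schema_mismatches` is made up for this example.

```python
import inspect


def schema_mismatches(tools, tool_fns):
    """Compare each tool's input_schema with its function signature."""
    problems = []
    for tool in tools:
        name = tool["name"]
        fn = tool_fns.get(name)
        if fn is None:
            problems.append(f"{name}: no function registered")
            continue
        params = inspect.signature(fn).parameters
        props = set(tool["input_schema"].get("properties", {}))
        required = set(tool["input_schema"].get("required", []))
        # Schema properties the function would reject as unexpected keywords.
        for extra in sorted(props - set(params)):
            problems.append(f"{name}: schema property '{extra}' is not a parameter")
        # Parameters without defaults must be marked required in the schema.
        for pname, p in params.items():
            if p.default is inspect.Parameter.empty and pname not in required:
                problems.append(f"{name}: parameter '{pname}' is not required in schema")
    return problems


def get_weather(city: str) -> str:
    return f"{city}: sunny"  # stub so the example runs offline


good = [{"name": "get_weather", "input_schema": {
    "type": "object", "properties": {"city": {"type": "string"}}, "required": ["city"]}}]
bad = [{"name": "get_weather", "input_schema": {
    "type": "object", "properties": {"location": {"type": "string"}}, "required": ["location"]}}]

print(schema_mismatches(good, {"get_weather": get_weather}))  # → []
for problem in schema_mismatches(bad, {"get_weather": get_weather}):
    print(problem)
```

Running it once at startup against `TOOLS` and `TOOL_FNS` catches renamed parameters before the agent ever sees them.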

Step 5: Add the safety rails you'll forget otherwise

Three things every production ReAct loop needs that the demo above doesn't:

```python
import concurrent.futures

# 1. Token budget: don't let one user query burn a $20 model call.
TOTAL_TOKEN_CEILING = 50000


# 2. Tool timeout: wrap tool calls so a hung HTTP request doesn't block forever.
def run_with_timeout(fn, kwargs, timeout=10):
    ex = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    try:
        # Raises concurrent.futures.TimeoutError if fn runs past the deadline.
        return ex.submit(lambda: fn(**kwargs)).result(timeout=timeout)
    finally:
        # wait=False matters: a `with` block would wait for the hung call on exit.
        # The worker thread cannot be killed and keeps running in the background.
        ex.shutdown(wait=False)


# 3. Tool allowlist per user: never trust the agent's tool selection blindly in prod.
ALLOWED_TOOLS_PER_USER = {"jake": {"get_weather", "calculator"}}
```

These aren't optional once real users hit your agent.
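The ceiling and the allowlist are only constants until something enforces them. Here is one way that might look, as a sketch. `TokenBudget` and `tools_for_user` are hypothetical helpers, and `SimpleNamespace` stands in for the `usage` object on an API response, which reports `input_tokens` and `output_tokens`.

```python
from types import SimpleNamespace


class TokenBudget:
    """Running total of tokens spent on one user query."""

    def __init__(self, ceiling):
        self.ceiling = ceiling
        self.used = 0

    def charge(self, usage):
        # usage is expected to expose input_tokens and output_tokens.
        self.used += usage.input_tokens + usage.output_tokens
        return self.used <= self.ceiling  # False once the query is over budget


def tools_for_user(user_id, tools, allowed_per_user):
    """Return only the tool schemas this user may call (none for unknown users)."""
    allowed = allowed_per_user.get(user_id, set())
    return [t for t in tools if t["name"] in allowed]


budget = TokenBudget(ceiling=50000)
print(budget.charge(SimpleNamespace(input_tokens=30000, output_tokens=1000)))  # → True
print(budget.charge(SimpleNamespace(input_tokens=31000, output_tokens=1000)))  # → False

tools = [{"name": "get_weather"}, {"name": "calculator"}]
allowed = {"jake": {"get_weather"}}
print([t["name"] for t in tools_for_user("jake", tools, allowed)])  # → ['get_weather']
print(tools_for_user("unknown", tools, allowed))                    # → []
```

In the loop you would call `budget.charge(response.usage)` after each request and stop when it returns `False`. Keep in mind that every turn resends the whole conversation, so input tokens add up faster than the visible output suggests. Filtering the `tools=` argument is a first line of defense. It is still worth checking `block.name` against the same allowlist right before executing a call.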

Where this breaks

- Tool functions that throw. A raised exception kills the loop unless caught. Wrap every tool call and return the error as a `tool_result`; the agent will adjust.

- Infinite loops on bad tools. A tool that returns "I don't know" forever causes the agent to keep retrying. Return concrete, structured errors so the agent has something to react to.

- Schema mismatch. The most common bug: your `input_schema` says `city` is required, but the call arrives with `location` (or your function takes a differently named parameter), so `fn(**block.input)` fails. Match the schema property names to your function's parameter names exactly.

- Tool drift across model versions. A prompt + toolkit that runs clean on Sonnet 4.5 may loop on a smaller model. Test against the model you'll actually deploy.
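Two of the failure modes above, vague errors and endless retries, can be softened with a little code. Treat the following as a sketch: the error fields (`error`, `message`, `retryable`) are an assumed convention rather than anything the API requires, and `RepeatGuard` is a hypothetical helper that flags a call once the same tool and input have been seen too many times.

```python
import json
from collections import Counter


def tool_error(kind, message, retryable):
    """Serialize a failure as JSON so the model sees what went wrong and whether to retry."""
    return json.dumps({"error": kind, "message": message, "retryable": retryable})


class RepeatGuard:
    """Flags a tool call once the same name and input have been seen too often."""

    def __init__(self, max_repeats=2):
        self.max_repeats = max_repeats
        self.seen = Counter()

    def should_block(self, name, tool_input):
        # sort_keys makes {"a": 1, "b": 2} and {"b": 2, "a": 1} count as the same call.
        key = (name, json.dumps(tool_input, sort_keys=True))
        self.seen[key] += 1
        return self.seen[key] > self.max_repeats


guard = RepeatGuard(max_repeats=2)
call = ("get_weather", {"city": "Atlantis"})
print([guard.should_block(*call) for _ in range(3)])  # → [False, False, True]

print(tool_error("not_found", "No weather data for 'Atlantis'.", retryable=False))
```

When `should_block` returns `True`, you could skip the call and send back a `tool_error(...)` with `retryable=False` as the tool result, which gives the model a clear reason to stop retrying.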
